- #Duplicacy wasabi retention policy password#

- #Duplicacy wasabi retention policy download#

- #Duplicacy wasabi retention policy free#

(Optional, but ideal) The Duplicacy version used to create the backup, in case there are backwards-compatibility problems in future.

You got this when you created the access key in Wasabi. Only you have it now; you can’t recover it from Wasabi later.


If your local storage crashes you will need the following information to restore from the cloud backup. Store it all in a good password manager like KeePass, but store the KeePass database file somewhere else!

- Can be seen in the Wasabi web portal as a filename if you know the bucket name.
- Can be seen in the Wasabi web portal, if you know which Wasabi access key you used.

Sometimes the backup, check, or prune might halt with an error. First you have to know about it, then act straight away. Timing is of the essence, because backup problems are easier to fix if you still have a local copy of the data. If your local storage crashes before you fix the cloud storage problem, then your backup problem with a local copy becomes a restore problem with an inconsistent/incomplete copy, which is much harder to solve, and you have probably lost data permanently.

The regular tasks are:

- Run backups (create snapshots) to capture changes to local storage.
- Check backups to see if all the expected chunks can be seen on cloud storage. The cloud may have lost them, or you might have accidentally deleted them by running prune and backup at the same time towards the same cloud storage (best not to do that). Note: this will catch deletion of a whole chunk from cloud storage, but not "bit rot".
- Prune backups (delete old snapshots from cloud storage) to save cloud storage costs in case you delete/modify files locally.

Please see the duplicacy-backup script in this repository for an example of a cron script that can be used.
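As a rough illustration of the backup, check and prune routine described above, here is a minimal Python sketch of a wrapper that cron could call. It is not the duplicacy-backup script mentioned in the text. The retention values passed to `-keep` and the names `STEPS`, `run_steps` and `main` are assumptions made for this example. The steps run one after another in a single process, so prune and backup never overlap, and the wrapper stops at the first failure so the error is noticed.

```python
import subprocess
import sys

# Retention values below are illustrative assumptions, not from the original text.
# "-keep n:m" asks Duplicacy to keep one snapshot every n days for snapshots
# older than m days (n = 0 removes them). The -keep options should be listed
# with the largest m first.
STEPS = [
    ["duplicacy", "backup", "-stats"],
    ["duplicacy", "check"],
    ["duplicacy", "prune", "-keep", "0:365", "-keep", "7:30", "-keep", "1:7"],
]


def run_steps(steps, repo_dir, runner=subprocess.run):
    """Run each command in order inside repo_dir and stop at the first failure.

    Returns (True, None) if every step exits with 0, otherwise
    (False, failed_command). The runner argument exists so the function
    can be exercised without Duplicacy installed.
    """
    for cmd in steps:
        result = runner(cmd, cwd=repo_dir)
        if result.returncode != 0:
            return False, cmd
    return True, None


def main(repo_dir):
    """Run the routine and return a process exit code (0 = success)."""
    ok, failed = run_steps(STEPS, repo_dir)
    if not ok:
        # Anything printed here ends up in cron's output, which cron can
        # mail to you if mail delivery is configured on the server.
        print("duplicacy step failed: " + " ".join(failed), file=sys.stderr)
        return 1
    return 0
```

A small script run by cron could call `sys.exit(main(repo_dir))`, where `repo_dir` is the directory you initialized as the Duplicacy repository. A non-zero exit code plus the message on stderr is what tells you something needs attention straight away.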

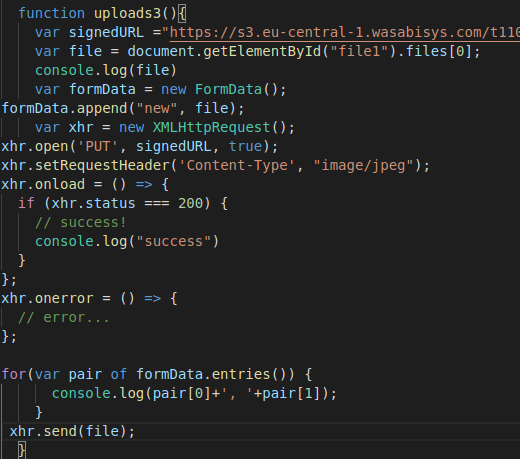

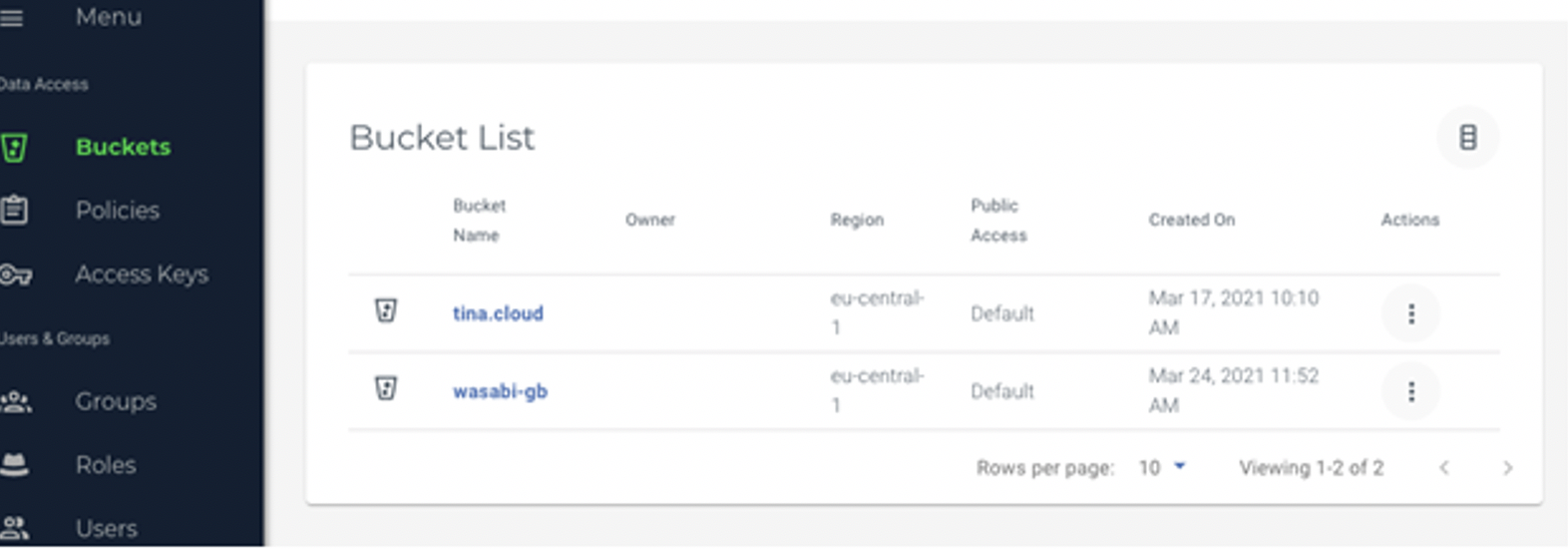

Was expecting a test runner, but it's an SGG(?). Restic tags? It's a backup program? Ah, no, it's an SSG.

I'm finally one of you guys! Thanks for helping get this far.

See comments. Restic for a more modern approach that supports a multitude of backends.

In my own tests, Restic had problems with >1TB repositories, but that was years ago, so it may have changed.

If I were in your shoes, I would have chosen to handle the backups with Restic.

Assuming you already have some local storage solution set up. In this case we are assuming it is a Linux server of some sort, with access to the internet.

- Create a Wasabi account, a storage bucket (give it a name), and access keys (save these for later).
- Download the duplicacy binary to your Linux server, so it is accessible from $PATH (for convenience).
- Create a safe place to store key information for when your local storage has crashed and you need to restore from the cloud backup. A good password manager database like KeePass, stored somewhere else.
- Initialize the duplicacy repository by running a command on the local Linux server towards the remote Wasabi backend.
- Run the first backup. This might take a long time, and must complete before any subsequent backups are run (e.g. via cron job), so you should do this once-off manually and not via your cron job.
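For the Duplicacy setup steps above (initialize the repository against Wasabi, then run the first backup by hand), the following Python sketch shows how the commands might be assembled. The helper names `wasabi_url`, `init_command` and `first_backup` are made up for this example. The `wasabi://region@endpoint/bucket/path` URL form is how I understand Duplicacy's Wasabi backend to be addressed, so check it against the Duplicacy storage documentation before relying on it. The region, endpoint, bucket and snapshot ID are yours to supply.

```python
import subprocess


def wasabi_url(region, endpoint, bucket, path=""):
    """Build a storage URL of the form wasabi://region@endpoint/bucket/path."""
    url = f"wasabi://{region}@{endpoint}/{bucket}"
    return f"{url}/{path}" if path else url


def init_command(snapshot_id, storage_url, encrypt=True):
    """Return the argv for 'duplicacy init'; -e turns on storage encryption."""
    cmd = ["duplicacy", "init"]
    if encrypt:
        cmd.append("-e")
    return cmd + [snapshot_id, storage_url]


def first_backup(repo_dir, snapshot_id, storage_url):
    """Initialize the repository, then run the first backup in the foreground.

    Meant to be run by hand from a terminal: Duplicacy prompts for the Wasabi
    access key, the secret and the storage password. check=True raises
    CalledProcessError if either command fails.
    """
    subprocess.run(init_command(snapshot_id, storage_url), cwd=repo_dir, check=True)
    subprocess.run(["duplicacy", "backup", "-stats"], cwd=repo_dir, check=True)
```

Running the first backup in the foreground like this keeps it out of cron, which matches the advice to let it finish before any scheduled backups start.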


I have very little power over internet connection speed, and there are very few alternatives here. I believe there are two different vendors providing connectivity to our town, and you can pick between them.

If your friend can venture up from $7 a month, it will open a lot of doors. You can use Arq and roll your own backup to Backblaze B2 or Wasabi (examples).

Since the only thing I care about is my homedir, I just use restic + S3. I add a bunch of CACHEDIR.TAG files to big dirs I don't want to back up, then just run it regularly, or it could be set up via some type of cron.

I would like to add yet another option. K8up is built around Restic and it is free open source software. It is very GitOps friendly, and users can define their backups without any need for admin privileges.


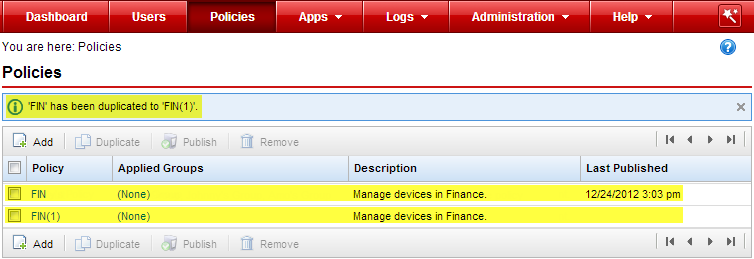

If I heavily encrypt all my files with something like Cryptomator or VeraCrypt before uploading them to a cloud storage service, does it matter which cloud storage provider we are using?

If your use case is only for backups, check out.

Hyper Backup: did we ever arrive at a final solution for retention? In your case, I don't think you can set a retention policy with HB. I think what it's saying is, don't use a policy with HB and with the bucket. Use HB first (which you cannot) OR use the bucket's direct policy.

I use a cloud storage provider to back up via Arq, but I don't expect to restore more than a few gigabytes at a time from that. It would take me a week or more to download a terabyte of data.

I use Storj and host a node (I get it, might not be what you're after). If it helps, my node is in a datacenter, not in my home/SOHO. I'm using Arq to send data up to Storj on my / others' machines.